MLflow Models

An MLflow Model is a standard format for packaging machine learning models that can be used in a variety of downstream tools—for example, real-time serving through a REST API or batch inference on Apache Spark. The format defines a convention that lets you save a model in different “flavors” that can be understood by different downstream tools.

Table of Contents

Storage Format

Each MLflow Model is a directory containing arbitrary files, together with an MLmodel

file in the root of the directory that can define multiple flavors that the model can be viewed

in.

The model aspect of the MLflow Model can either be a serialized object (e.g., a pickled scikit-learn model)

or a Python script (or notebook, if running in Databricks) that contains the model instance that has been defined

with the mlflow.models.set_model() API.

Flavors are the key concept that makes MLflow Models powerful: they are a convention that deployment

tools can use to understand the model, which makes it possible to write tools that work with models

from any ML library without having to integrate each tool with each library. MLflow defines

several “standard” flavors that all of its built-in deployment tools support, such as a “Python

function” flavor that describes how to run the model as a Python function. However, libraries can

also define and use other flavors. For example, MLflow’s mlflow.sklearn library allows

loading models back as a scikit-learn Pipeline object for use in code that is aware of

scikit-learn, or as a generic Python function for use in tools that just need to apply the model

(for example, the mlflow deployments tool with the option -t sagemaker for deploying models

to Amazon SageMaker).

MLmodel file

All of the flavors that a particular model supports are defined in its MLmodel file in YAML

format. For example, running python examples/sklearn_logistic_regression/train.py from MLflow repo

will create the following files under the model directory:

# Directory written by mlflow.sklearn.save_model(model, "model", input_example=...)

model/

├── MLmodel

├── model.pkl

├── conda.yaml

├── python_env.yaml

├── requirements.txt

├── input_example.json (optional, only logged when input example is provided and valid during model logging)

├── serving_input_example.json (optional, only logged when input example is provided and valid during model logging)

└── environment_variables.txt (optional, only logged when environment variables are used during model inference)

And its MLmodel file describes two flavors:

time_created: 2018-05-25T17:28:53.35

flavors:

sklearn:

sklearn_version: 0.19.1

pickled_model: model.pkl

python_function:

loader_module: mlflow.sklearn

Apart from a flavors field listing the model flavors, the MLmodel YAML format can contain the following fields:

time_created: Date and time when the model was created, in UTC ISO 8601 format.run_id: ID of the run that created the model, if the model was saved using MLflow Tracking.signature: model signature in JSON format.input_example: reference to an artifact with input example.databricks_runtime: Databricks runtime version and type, if the model was trained in a Databricks notebook or job.mlflow_version: The version of MLflow that was used to log the model.

Additional Logged Files

For environment recreation, we automatically log conda.yaml, python_env.yaml, and requirements.txt files whenever a model is logged.

These files can then be used to reinstall dependencies using conda or virtualenv with pip. Please see

How MLflow Model Records Dependencies for more details about these files.

If a model input example is provided when logging the model, two additional files input_example.json and serving_input_example.json are logged.

See Model Input Example for more details.

When logging a model, model metadata files (MLmodel, conda.yaml, python_env.yaml, requirements.txt) are copied to a subdirectory named metadata. For wheeled models, original_requirements.txt file is also copied.

Note

When a model registered in the MLflow Model Registry is downloaded, a YAML file named registered_model_meta is added to the model directory on the downloader’s side. This file contains the name and version of the model referenced in the MLflow Model Registry, and will be used for deployment and other purposes.

Attention

If you log a model within Databricks, MLflow also creates a metadata subdirectory within

the model directory. This subdirectory contains the lightweight copy of aforementioned

metadata files for internal use.

Environment variables file

MLflow records the environment variables that are used during model inference in environment_variables.txt file when logging a model.

Attention

environment_variables.txt file only contains names of the environment variables that are used during model inference,

values are not stored.

Currently MLflow only logs the environment variables whose name contains any of the following keywords:

RECORD_ENV_VAR_ALLOWLIST = {

# api key related

"API_KEY", # e.g. OPENAI_API_KEY

"API_TOKEN",

# databricks auth related

"DATABRICKS_HOST",

"DATABRICKS_USERNAME",

"DATABRICKS_PASSWORD",

"DATABRICKS_TOKEN",

"DATABRICKS_INSECURE",

"DATABRICKS_CLIENT_ID",

"DATABRICKS_CLIENT_SECRET",

"_DATABRICKS_WORKSPACE_HOST",

"_DATABRICKS_WORKSPACE_ID",

}

Example of a pyfunc model that uses environment variables:

import mlflow

import os

os.environ["TEST_API_KEY"] = "test_api_key"

class MyModel(mlflow.pyfunc.PythonModel):

def predict(self, context, model_input, params=None):

if os.environ.get("TEST_API_KEY"):

return model_input

raise Exception("API key not found")

with mlflow.start_run():

model_info = mlflow.pyfunc.log_model(

"model", python_model=MyModel(), input_example="data"

)

Environment variable TEST_API_KEY is logged in the environment_variables.txt file like below

# This file records environment variable names that are used during model inference.

# They might need to be set when creating a serving endpoint from this model.

# Note: it is not guaranteed that all environment variables listed here are required

TEST_API_KEY

Attention

Before you deploy a model to a serving endpoint, review the environment_variables.txt file to ensure all necessary environment variables for model inference are set. Note that not all environment variables listed in the file are always required for model inference. For detailed instructions on setting environment variables on a databricks serving endpoint, refer to this guidance.

Note

To disable this feature, set the environment variable MLFLOW_RECORD_ENV_VARS_IN_MODEL_LOGGING to false.

Managing Model Dependencies

An MLflow Model infers dependencies required for the model flavor and automatically logs them. However, it also allows you to define extra dependencies or custom Python code, and offer a tool to validate them in a sandbox environment. Please refer to Managing Dependencies in MLflow Models for more details.

Model Signatures And Input Examples

In MLflow, understanding the intricacies of model signatures and input examples is crucial for effective model management and deployment.

Model Signature: Defines the schema for model inputs, outputs, and additional inference parameters, promoting a standardized interface for model interaction.

Model Input Example: Provides a concrete instance of valid model input, aiding in understanding and testing model requirements. Additionally, if an input example is provided when logging a model, a model signature will be automatically inferred and stored if not explicitly provided.

Model Serving Payload Example: Provides a json payload example for querying a deployed model endpoint. If an input example is provided when logging a model, a serving paylod example is automatically generated from the input example and saved as

serving_input_example.json.

Our documentation delves into several key areas:

Supported Signature Types: We cover the different data types that are supported, such as tabular data for traditional machine learning models and tensors for deep learning models.

Signature Enforcement: Discusses how MLflow enforces schema compliance, ensuring that the provided inputs match the model’s expectations.

Logging Models with Signatures: Guides on how to incorporate signatures when logging models, enhancing clarity and reliability in model operations.

For a detailed exploration of these concepts, including examples and best practices, visit the Model Signatures and Examples Guide. If you would like to see signature enforcement in action, see the notebook tutorial on Model Signatures to learn more.

Model API

You can save and load MLflow Models in multiple ways. First, MLflow includes integrations with

several common libraries. For example, mlflow.sklearn contains

save_model, log_model,

and load_model functions for scikit-learn models. Second,

you can use the mlflow.models.Model class to create and write models. This

class has four key functions:

add_flavorto add a flavor to the model. Each flavor has a string name and a dictionary of key-value attributes, where the values can be any object that can be serialized to YAML.saveto save the model to a local directory.logto log the model as an artifact in the current run using MLflow Tracking.loadto load a model from a local directory or from an artifact in a previous run.

Models From Code

To learn more about the Models From Code feature, please visit the deep dive guide for more in-depth explanation and to see additional examples.

Note

The Models from Code feature is available in MLflow versions 2.12.2 and later. This feature is experimental and may change in future releases.

The Models from Code feature allows you to define and log models directly from a stand-alone python script. This feature is particularly useful when you want to

log models that can be effectively stored as a code representation (models that do not need optimized weights through training) or applications

that rely on external services (e.g., LangChain chains). Another benefit is that this approach entirely bypasses the use of the pickle or

cloudpickle modules within Python, which can carry security risks when loading untrusted models.

Note

This feature is only supported for LangChain, LlamaIndex, and PythonModel models.

In order to log a model from code, you can leverage the mlflow.models.set_model() API. This API allows you to define a model by specifying

an instance of the model class directly within the file where the model is defined. When logging such a model, a

file path is specified (instead of an object) that points to the Python file containing both the model class definition and the usage of the

set_model API applied on an instance of your custom model.

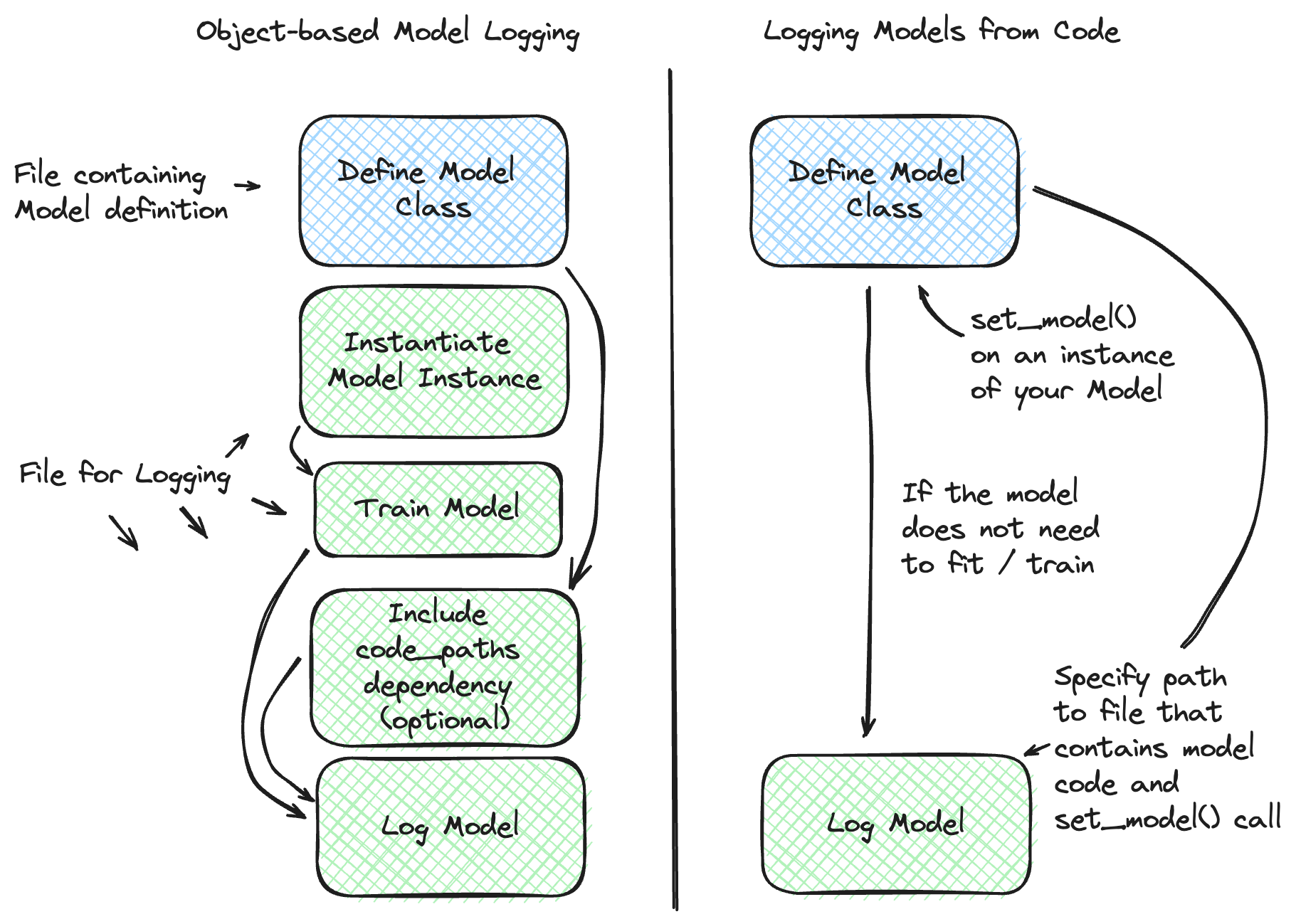

The figure below provides a comparison of the standard model logging process and the Models from Code feature for models that are eligible to be saved using the Models from Code feature:

For example, defining a model in a separate file named my_model.py:

import mlflow

from mlflow.models import set_model

class MyModel(mlflow.pyfunc.PythonModel):

def predict(self, context, model_input):

return model_input

# Define the custom PythonModel instance that will be used for inference

set_model(MyModel())

Note

The Models from code feature does not support capturing import statements that are from external file references. If you have dependencies that

are not captured via a pip install, dependencies will need to be included and resolved via appropriate absolute path import references from

using the code_paths feature.

For simplicity’s sake, it is recommended to encapsulate all of your required local dependencies for a model defined from code within the same

python script file due to limitations around code_paths dependency pathing resolution.

Tip

When defining a model from code and using the mlflow.models.set_model() API, the code that is defined in the script that is being logged

will be executed internally to ensure that it is valid code. If you have connections to external services within your script (e.g. you are connecting

to a GenAI service within LangChain), be aware that you will incur a connection request to that service when the model is being logged.

Then, logging the model from the file path in a different python script:

import mlflow

model_path = "my_model.py"

with mlflow.start_run():

model_info = mlflow.pyfunc.log_model(

python_model=model_path, # Define the model as the path to the Python file

artifact_path="my_model",

)

# Loading the model behaves exactly as if an instance of MyModel had been logged

my_model = mlflow.pyfunc.load_model(model_info.model_uri)

Warning

The mlflow.models.set_model() API is not threadsafe. Do not attempt to use this feature if you are logging models concurrently

from multiple threads. This fluent API utilizes a global active model state that has no consistency guarantees. If you are interested in threadsafe

logging APIs, please use the mlflow.client.MlflowClient APIs for logging models.

Built-In Model Flavors

MLflow provides several standard flavors that might be useful in your applications. Specifically, many of its deployment tools support these flavors, so you can export your own model in one of these flavors to benefit from all these tools:

Python Function (python_function)

The python_function model flavor serves as a default model interface for MLflow Python models.

Any MLflow Python model is expected to be loadable as a python_function model. This enables

other MLflow tools to work with any python model regardless of which persistence module or

framework was used to produce the model. This interoperability is very powerful because it allows

any Python model to be productionized in a variety of environments.

In addition, the python_function model flavor defines a generic filesystem model format for Python models and provides utilities for saving and loading models

to and from this format. The format is self-contained in the sense that it includes all the

information necessary to load and use a model. Dependencies are stored either directly with the

model or referenced via conda environment. This model format allows other tools to integrate

their models with MLflow.

How To Save Model As Python Function

Most python_function models are saved as part of other model flavors - for example, all mlflow

built-in flavors include the python_function flavor in the exported models. In addition, the

mlflow.pyfunc module defines functions for creating python_function models explicitly.

This module also includes utilities for creating custom Python models, which is a convenient way of

adding custom python code to ML models. For more information, see the custom Python models

documentation.

For information on how to store a custom model from a python script (models from code functionality), see the guide to models from code for the recommended approaches.

How To Load And Score Python Function Models

Loading Models

You can load python_function models in Python by using the mlflow.pyfunc.load_model() function. It is important

to note that load_model assumes all dependencies are already available and will not perform any checks or installations

of dependencies. For deployment options that handle dependencies, refer to the model deployment section.

Scoring Models

Once a model is loaded, it can be scored in two primary ways:

Synchronous Scoring The standard method for scoring is using the

predictmethod, which supports various input types and returns a scalar or collection based on the input data. The method signature is:predict(data: Union[pandas.Series, pandas.DataFrame, numpy.ndarray, csc_matrix, csr_matrix, List[Any], Dict[str, Any], str], params: Optional[Dict[str, Any]] = None) → Union[pandas.Series, pandas.DataFrame, numpy.ndarray, list, str]Synchronous Streaming Scoring

Note

predict_streamis a new interface that was added to MLflow in the 2.12.2 release. Previous versions of MLflow will not support this interface. In order to utilizepredict_streamin a custom Python Function Model, you must implement thepredict_streammethod in your model class and return a generator type.For models that support streaming data processing,

predict_streammethod is available. This method returns agenerator, which yields a stream of responses, allowing for efficient processing of large datasets or continuous data streams. Note that thepredict_streammethod is not available for all model types. The usage involves iterating over the generator to consume the responses:predict_stream(data: Any, params: Optional[Dict[str, Any]] = None) → GeneratorType

Demonstrating predict_stream()

Below is an example demonstrating how to define, save, load, and use a streamable model with the predict_stream() method:

import mlflow

import os

# Define a custom model that supports streaming

class StreamableModel(mlflow.pyfunc.PythonModel):

def predict(self, context, model_input, params=None):

# Regular predict method implementation (optional for this demo)

return "regular-predict-output"

def predict_stream(self, context, model_input, params=None):

# Yielding elements one at a time

for element in ["a", "b", "c", "d", "e"]:

yield element

# Save the model to a directory

tmp_path = "/tmp/test_model"

pyfunc_model_path = os.path.join(tmp_path, "pyfunc_model")

python_model = StreamableModel()

mlflow.pyfunc.save_model(path=pyfunc_model_path, python_model=python_model)

# Load the model

loaded_pyfunc_model = mlflow.pyfunc.load_model(model_uri=pyfunc_model_path)

# Use predict_stream to get a generator

stream_output = loaded_pyfunc_model.predict_stream("single-input")

# Consuming the generator using next

print(next(stream_output)) # Output: 'a'

print(next(stream_output)) # Output: 'b'

# Alternatively, consuming the generator using a for-loop

for response in stream_output:

print(response) # This will print 'c', 'd', 'e'

Python Function Model Interfaces

All PyFunc models will support pandas.DataFrame as an input. In addition to pandas.DataFrame, DL PyFunc models will also support tensor inputs in the form of numpy.ndarrays. To verify whether a model flavor supports tensor inputs, please check the flavor’s documentation.

For models with a column-based schema, inputs are typically provided in the form of a pandas.DataFrame. If a dictionary mapping column name to values is provided as input for schemas with named columns or if a python List or a numpy.ndarray is provided as input for schemas with unnamed columns, MLflow will cast the input to a DataFrame. Schema enforcement and casting with respect to the expected data types is performed against the DataFrame.

For models with a tensor-based schema, inputs are typically provided in the form of a numpy.ndarray or a dictionary mapping the tensor name to its np.ndarray value. Schema enforcement will check the provided input’s shape and type against the shape and type specified in the model’s schema and throw an error if they do not match.

For models where no schema is defined, no changes to the model inputs and outputs are made. MLflow will propagate any errors raised by the model if the model does not accept the provided input type.

The python environment that a PyFunc model is loaded into for prediction or inference may differ from the environment

in which it was trained. In the case of an environment mismatch, a warning message will be printed when calling

mlflow.pyfunc.load_model(). This warning statement will identify the packages that have a version mismatch

between those used during training and the current environment. In order to get the full dependencies of the

environment in which the model was trained, you can call mlflow.pyfunc.get_model_dependencies().

Furthermore, if you want to run model inference in the same environment used in model training, you can call

mlflow.pyfunc.spark_udf() with the env_manager argument set as “conda”. This will generate the environment

from the conda.yaml file, ensuring that the python UDF will execute with the exact package versions that were used

during training.

Some PyFunc models may accept model load configuration, which controls how the model is loaded and predictions computed. You can learn which configuration the model supports by inspecting the model’s flavor metadata:

model_info = mlflow.models.get_model_info(model_uri)

model_info.flavors[mlflow.pyfunc.FLAVOR_NAME][mlflow.pyfunc.MODEL_CONFIG]

Alternatively, you can load the PyFunc model and inspect the model_config property:

pyfunc_model = mlflow.pyfunc.load_model(model_uri)

pyfunc_model.model_config

Model configuration can be changed at loading time by indicating model_config parameter in the

mlflow.pyfunc.load_model() method:

pyfunc_model = mlflow.pyfunc.load_model(model_uri, model_config=dict(temperature=0.93))

When a model configuration value is changed, those values the configuration the model was saved with. Indicating an invalid model configuration key for a model results in that configuration being ignored. A warning is displayed mentioning the ignored entries.

Note

Model configuration vs parameters with default values in signatures: Use model configuration when you need to provide model publishers for a way to change how the model is loaded into memory and how predictions are computed for all the samples. For instance, a key like user_gpu. Model consumers are not able to change those values at predict time. Use parameters with default values in the signature to provide a users the ability to change how predictions are computed on each data sample.

R Function (crate)

The crate model flavor defines a generic model format for representing an arbitrary R prediction

function as an MLflow model using the crate function from the

carrier package. The prediction function is expected to take a dataframe as input and

produce a dataframe, a vector or a list with the predictions as output.

This flavor requires R to be installed in order to be used.

crate usage

For a minimal crate model, an example configuration for the predict function is:

library(mlflow)

library(carrier)

# Load iris dataset

data("iris")

# Learn simple linear regression model

model <- lm(Sepal.Width~Sepal.Length, data = iris)

# Define a crate model

# call package functions with an explicit :: namespace.

crate_model <- crate(

function(new_obs) stats::predict(model, data.frame("Sepal.Length" = new_obs)),

model = model

)

# log the model

model_path <- mlflow_log_model(model = crate_model, artifact_path = "iris_prediction")

# load the logged model and make a prediction

model_uri <- paste0(mlflow_get_run()$artifact_uri, "/iris_prediction")

mlflow_model <- mlflow_load_model(model_uri = model_uri,

flavor = NULL,

client = mlflow_client())

prediction <- mlflow_predict(model = mlflow_model, data = 5)

print(prediction)

H2O (h2o)

The h2o model flavor enables logging and loading H2O models.

The mlflow.h2o module defines save_model() and

log_model() methods in python, and

mlflow_save_model and

mlflow_log_model in R for saving H2O models in MLflow Model

format.

These methods produce MLflow Models with the python_function flavor, allowing you to load them

as generic Python functions for inference via mlflow.pyfunc.load_model().

This loaded PyFunc model can be scored with only DataFrame input. When you load

MLflow Models with the h2o flavor using mlflow.pyfunc.load_model(),

the h2o.init() method is

called. Therefore, the correct version of h2o(-py) must be installed in the loader’s

environment. You can customize the arguments given to

h2o.init() by modifying the

init entry of the persisted H2O model’s YAML configuration file: model.h2o/h2o.yaml.

Finally, you can use the mlflow.h2o.load_model() method to load MLflow Models with the

h2o flavor as H2O model objects.

For more information, see mlflow.h2o.

h2o pyfunc usage

For a minimal h2o model, here is an example of the pyfunc predict() method in a classification scenario :

import mlflow

import h2o

h2o.init()

from h2o.estimators.glm import H2OGeneralizedLinearEstimator

# import the prostate data

df = h2o.import_file(

"http://s3.amazonaws.com/h2o-public-test-data/smalldata/prostate/prostate.csv.zip"

)

# convert the columns to factors

df["CAPSULE"] = df["CAPSULE"].asfactor()

df["RACE"] = df["RACE"].asfactor()

df["DCAPS"] = df["DCAPS"].asfactor()

df["DPROS"] = df["DPROS"].asfactor()

# split the data

train, test, valid = df.split_frame(ratios=[0.7, 0.15])

# generate a GLM model

glm_classifier = H2OGeneralizedLinearEstimator(

family="binomial", lambda_=0, alpha=0.5, nfolds=5, compute_p_values=True

)

with mlflow.start_run():

glm_classifier.train(

y="CAPSULE", x=["AGE", "RACE", "VOL", "GLEASON"], training_frame=train

)

metrics = glm_classifier.model_performance()

metrics_to_track = ["MSE", "RMSE", "r2", "logloss"]

metrics_to_log = {

key: value

for key, value in metrics._metric_json.items()

if key in metrics_to_track

}

params = glm_classifier.params

mlflow.log_params(params)

mlflow.log_metrics(metrics_to_log)

model_info = mlflow.h2o.log_model(glm_classifier, artifact_path="h2o_model_info")

# load h2o model and make a prediction

h2o_pyfunc = mlflow.pyfunc.load_model(model_uri=model_info.model_uri)

test_df = test.as_data_frame()

predictions = h2o_pyfunc.predict(test_df)

print(predictions)

# it is also possible to load the model and predict using h2o methods on the h2o frame

# h2o_model = mlflow.h2o.load_model(model_info.model_uri)

# predictions = h2o_model.predict(test)

Keras (keras)

The keras model flavor enables logging and loading Keras models. It is available in both Python

and R clients. In R, you can save or log the model using

mlflow_save_model and mlflow_log_model.

These functions serialize Keras models as HDF5 files using the Keras library’s built-in

model persistence functions. You can use

mlflow_load_model function in R to load MLflow Models

with the keras flavor as Keras Model objects.

Keras pyfunc usage

For a minimal Sequential model, an example configuration for the pyfunc predict() method is:

import mlflow

import numpy as np

import pathlib

import shutil

from tensorflow import keras

mlflow.tensorflow.autolog()

X = np.array([-2, -1, 0, 1, 2, 1]).reshape(-1, 1)

y = np.array([0, 0, 1, 1, 1, 0])

model = keras.Sequential(

[

keras.Input(shape=(1,)),

keras.layers.Dense(1, activation="sigmoid"),

]

)

model.compile(loss="binary_crossentropy", optimizer="adam", metrics=["accuracy"])

model.fit(X, y, batch_size=3, epochs=5, validation_split=0.2)

local_artifact_dir = "/tmp/mlflow/keras_model"

pathlib.Path(local_artifact_dir).mkdir(parents=True, exist_ok=True)

model_uri = f"runs:/{mlflow.last_active_run().info.run_id}/model"

keras_pyfunc = mlflow.pyfunc.load_model(

model_uri=model_uri, dst_path=local_artifact_dir

)

data = np.array([-4, 1, 0, 10, -2, 1]).reshape(-1, 1)

predictions = keras_pyfunc.predict(data)

shutil.rmtree(local_artifact_dir)

MLeap (mleap)

Warning

The mleap model flavor is deprecated as of MLflow 2.6.0 and will be removed in a future release.

The mleap model flavor supports saving Spark models in MLflow format using the

MLeap persistence mechanism. MLeap is an inference-optimized

format and execution engine for Spark models that does not depend on

SparkContext

to evaluate inputs.

Note

You can save Spark models in MLflow format with the mleap flavor by specifying the

sample_input argument of the mlflow.spark.save_model() or

mlflow.spark.log_model() method (recommended). For more details see Spark MLlib.

The mlflow.mleap module also

defines save_model() and

log_model() methods for saving MLeap models in MLflow format,

but these methods do not include the python_function flavor in the models they produce.

Similarly, mleap models can be saved in R with mlflow_save_model and loaded with mlflow_load_model, with

mlflow_save_model requiring sample_input to be specified as a

sample Spark dataframe containing input data to the model is required by MLeap for data schema

inference.

A companion module for loading MLflow Models with the MLeap flavor is available in the

mlflow/java package.

For more information, see mlflow.spark, mlflow.mleap, and the

MLeap documentation.

PyTorch (pytorch)

The pytorch model flavor enables logging and loading PyTorch models.

The mlflow.pytorch module defines utilities for saving and loading MLflow Models with the

pytorch flavor. You can use the mlflow.pytorch.save_model() and

mlflow.pytorch.log_model() methods to save PyTorch models in MLflow format; both of these

functions use the torch.save() method to

serialize PyTorch models. Additionally, you can use the mlflow.pytorch.load_model()

method to load MLflow Models with the pytorch flavor as PyTorch model objects. This loaded

PyFunc model can be scored with both DataFrame input and numpy array input. Finally, models

produced by mlflow.pytorch.save_model() and mlflow.pytorch.log_model() contain

the python_function flavor, allowing you to load them as generic Python functions for inference

via mlflow.pyfunc.load_model().

Note

When using the PyTorch flavor, if a GPU is available at prediction time, the default GPU will be used to run inference. To disable this behavior, users can use the MLFLOW_DEFAULT_PREDICTION_DEVICE or pass in a device with the device parameter for the predict function.

Note

In case of multi gpu training, ensure to save the model only with global rank 0 gpu. This avoids logging multiple copies of the same model.

PyTorch pyfunc usage

For a minimal PyTorch model, an example configuration for the pyfunc predict() method is:

import numpy as np

import mlflow

from mlflow.models import infer_signature

import torch

from torch import nn

net = nn.Linear(6, 1)

loss_function = nn.L1Loss()

optimizer = torch.optim.Adam(net.parameters(), lr=1e-4)

X = torch.randn(6)

y = torch.randn(1)

epochs = 5

for epoch in range(epochs):

optimizer.zero_grad()

outputs = net(X)

loss = loss_function(outputs, y)

loss.backward()

optimizer.step()

with mlflow.start_run() as run:

signature = infer_signature(X.numpy(), net(X).detach().numpy())

model_info = mlflow.pytorch.log_model(net, "model", signature=signature)

pytorch_pyfunc = mlflow.pyfunc.load_model(model_uri=model_info.model_uri)

predictions = pytorch_pyfunc.predict(torch.randn(6).numpy())

print(predictions)

For more information, see mlflow.pytorch.

Scikit-learn (sklearn)

The sklearn model flavor provides an easy-to-use interface for saving and loading scikit-learn

models. The mlflow.sklearn module defines

save_model() and

log_model() functions that save scikit-learn models in

MLflow format, using either Python’s pickle module (Pickle) or CloudPickle for model serialization.

These functions produce MLflow Models with the python_function flavor, allowing them to

be loaded as generic Python functions for inference via mlflow.pyfunc.load_model().

This loaded PyFunc model can only be scored with DataFrame input. Finally, you can use the

mlflow.sklearn.load_model() method to load MLflow Models with the sklearn flavor as

scikit-learn model objects.

Scikit-learn pyfunc usage

For a Scikit-learn LogisticRegression model, an example configuration for the pyfunc predict() method is:

import mlflow

from mlflow.models import infer_signature

import numpy as np

from sklearn.linear_model import LogisticRegression

with mlflow.start_run():

X = np.array([-2, -1, 0, 1, 2, 1]).reshape(-1, 1)

y = np.array([0, 0, 1, 1, 1, 0])

lr = LogisticRegression()

lr.fit(X, y)

signature = infer_signature(X, lr.predict(X))

model_info = mlflow.sklearn.log_model(

sk_model=lr, artifact_path="model", signature=signature

)

sklearn_pyfunc = mlflow.pyfunc.load_model(model_uri=model_info.model_uri)

data = np.array([-4, 1, 0, 10, -2, 1]).reshape(-1, 1)

predictions = sklearn_pyfunc.predict(data)

For more information, see mlflow.sklearn.

Spark MLlib (spark)

The spark model flavor enables exporting Spark MLlib models as MLflow Models.

The mlflow.spark module defines

save_model()to save a Spark MLlib model to a DBFS path.log_model()to upload a Spark MLlib model to the tracking server.mlflow.spark.load_model()to load MLflow Models with thesparkflavor as Spark MLlib pipelines.

MLflow Models produced by these functions contain the python_function flavor,

allowing you to load them as generic Python functions via mlflow.pyfunc.load_model().

This loaded PyFunc model can only be scored with DataFrame input.

When a model with the spark flavor is loaded as a Python function via

mlflow.pyfunc.load_model(), a new

SparkContext

is created for model inference; additionally, the function converts all Pandas DataFrame inputs to

Spark DataFrames before scoring. While this initialization overhead and format translation latency

is not ideal for high-performance use cases, it enables you to easily deploy any

MLlib PipelineModel to any production environment supported by MLflow

(SageMaker, AzureML, etc).

Spark MLlib pyfunc usage

from pyspark.ml.classification import LogisticRegression

from pyspark.ml.linalg import Vectors

from pyspark.sql import SparkSession

import mlflow

# Prepare training data from a list of (label, features) tuples.

spark = SparkSession.builder.appName("LogisticRegressionExample").getOrCreate()

training = spark.createDataFrame(

[

(1.0, Vectors.dense([0.0, 1.1, 0.1])),

(0.0, Vectors.dense([2.0, 1.0, -1.0])),

(0.0, Vectors.dense([2.0, 1.3, 1.0])),

(1.0, Vectors.dense([0.0, 1.2, -0.5])),

],

["label", "features"],

)

# Create and fit a LogisticRegression instance

lr = LogisticRegression(maxIter=10, regParam=0.01)

lr_model = lr.fit(training)

# Serialize the Model

with mlflow.start_run():

model_info = mlflow.spark.log_model(lr_model, "spark-model")

# Load saved model

lr_model_saved = mlflow.pyfunc.load_model(model_info.model_uri)

# Make predictions on test data.

# The DataFrame used in the predict method must be a Pandas DataFrame

test = spark.createDataFrame(

[

(1.0, Vectors.dense([-1.0, 1.5, 1.3])),

(0.0, Vectors.dense([3.0, 2.0, -0.1])),

(1.0, Vectors.dense([0.0, 2.2, -1.5])),

],

["label", "features"],

).toPandas()

prediction = lr_model_saved.predict(test)

Note

Note that when the sample_input parameter is provided to log_model() or

save_model(), the Spark model is automatically saved as an mleap flavor

by invoking mlflow.mleap.add_to_model().

For example, the follow code block:

training_df = spark.createDataFrame([

(0, "a b c d e spark", 1.0),

(1, "b d", 0.0),

(2, "spark f g h", 1.0),

(3, "hadoop mapreduce", 0.0) ], ["id", "text", "label"])

tokenizer = Tokenizer(inputCol="text", outputCol="words")

hashingTF = HashingTF(inputCol=tokenizer.getOutputCol(), outputCol="features")

lr = LogisticRegression(maxIter=10, regParam=0.001)

pipeline = Pipeline(stages=[tokenizer, hashingTF, lr])

model = pipeline.fit(training_df)

mlflow.spark.log_model(model, "spark-model", sample_input=training_df)

results in the following directory structure logged to the MLflow Experiment:

# Directory written by with the addition of mlflow.mleap.add_to_model(model, "spark-model", training_df)

# Note the addition of the mleap directory

spark-model/

├── mleap

├── sparkml

├── MLmodel

├── conda.yaml

├── python_env.yaml

└── requirements.txt

For more information, see mlflow.mleap.

For more information, see mlflow.spark.

TensorFlow (tensorflow)

The simple example below shows how to log params and metrics in mlflow for a custom training loop

using low-level TensorFlow API. See tf-keras-example. for an example of mlflow and tf.keras models.

import numpy as np

import tensorflow as tf

import mlflow

x = np.linspace(-4, 4, num=512)

y = 3 * x + 10

# estimate w and b where y = w * x + b

learning_rate = 0.1

x_train = tf.Variable(x, trainable=False, dtype=tf.float32)

y_train = tf.Variable(y, trainable=False, dtype=tf.float32)

# initial values

w = tf.Variable(1.0)

b = tf.Variable(1.0)

with mlflow.start_run():

mlflow.log_param("learning_rate", learning_rate)

for i in range(1000):

with tf.GradientTape(persistent=True) as tape:

# calculate MSE = 0.5 * (y_predict - y_train)^2

y_predict = w * x_train + b

loss = 0.5 * tf.reduce_mean(tf.square(y_predict - y_train))

mlflow.log_metric("loss", value=loss.numpy(), step=i)

# Update the trainable variables

# w = w - learning_rate * gradient of loss function w.r.t. w

# b = b - learning_rate * gradient of loss function w.r.t. b

w.assign_sub(learning_rate * tape.gradient(loss, w))

b.assign_sub(learning_rate * tape.gradient(loss, b))

print(f"W = {w.numpy():.2f}, b = {b.numpy():.2f}")

ONNX (onnx)

The onnx model flavor enables logging of ONNX models in MLflow format via

the mlflow.onnx.save_model() and mlflow.onnx.log_model() methods. These

methods also add the python_function flavor to the MLflow Models that they produce, allowing the

models to be interpreted as generic Python functions for inference via

mlflow.pyfunc.load_model(). This loaded PyFunc model can be scored with

both DataFrame input and numpy array input. The python_function representation of an MLflow

ONNX model uses the ONNX Runtime execution engine for

evaluation. Finally, you can use the mlflow.onnx.load_model() method to load MLflow

Models with the onnx flavor in native ONNX format.

For more information, see mlflow.onnx and http://onnx.ai/.

Warning

The default behavior for saving ONNX files is to use the ONNX save option save_as_external_data=True

in order to support model files that are in excess of 2GB. For edge deployments of small model files, this

may create issues. If you need to save a small model as a single file for such deployment considerations,

you can set the parameter save_as_external_data=False in either mlflow.onnx.save_model() or

mlflow.onnx.log_model() to force the serialization of the model as a small file. Note that if the

model is in excess of 2GB, saving as a single file will not work.

ONNX pyfunc usage example

For an ONNX model, an example configuration that uses pytorch to train a dummy model, converts it to ONNX, logs to mlflow and makes a prediction using pyfunc predict() method is:

import numpy as np

import mlflow

from mlflow.models import infer_signature

import onnx

import torch

from torch import nn

# define a torch model

net = nn.Linear(6, 1)

loss_function = nn.L1Loss()

optimizer = torch.optim.Adam(net.parameters(), lr=1e-4)

X = torch.randn(6)

y = torch.randn(1)

# run model training

epochs = 5

for epoch in range(epochs):

optimizer.zero_grad()

outputs = net(X)

loss = loss_function(outputs, y)

loss.backward()

optimizer.step()

# convert model to ONNX and load it

torch.onnx.export(net, X, "model.onnx")

onnx_model = onnx.load_model("model.onnx")

# log the model into a mlflow run

with mlflow.start_run():

signature = infer_signature(X.numpy(), net(X).detach().numpy())

model_info = mlflow.onnx.log_model(onnx_model, "model", signature=signature)

# load the logged model and make a prediction

onnx_pyfunc = mlflow.pyfunc.load_model(model_info.model_uri)

predictions = onnx_pyfunc.predict(X.numpy())

print(predictions)

XGBoost (xgboost)

The xgboost model flavor enables logging of XGBoost models

in MLflow format via the mlflow.xgboost.save_model() and mlflow.xgboost.log_model() methods in python and mlflow_save_model and mlflow_log_model in R respectively.

These methods also add the python_function flavor to the MLflow Models that they produce, allowing the

models to be interpreted as generic Python functions for inference via

mlflow.pyfunc.load_model(). This loaded PyFunc model can only be scored with DataFrame input.

You can also use the mlflow.xgboost.load_model()

method to load MLflow Models with the xgboost model flavor in native XGBoost format.

Note that the xgboost model flavor only supports an instance of xgboost.Booster,

not models that implement the scikit-learn API.

XGBoost pyfunc usage

The example below

Loads the IRIS dataset from

scikit-learnTrains an XGBoost Classifier

Logs the model and params using

mlflowLoads the logged model and makes predictions

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from xgboost import XGBClassifier

import mlflow

from mlflow.models import infer_signature

data = load_iris()

X_train, X_test, y_train, y_test = train_test_split(

data["data"], data["target"], test_size=0.2

)

xgb_classifier = XGBClassifier(

n_estimators=10,

max_depth=3,

learning_rate=1,

objective="binary:logistic",

random_state=123,

)

# log fitted model and XGBClassifier parameters

with mlflow.start_run():

xgb_classifier.fit(X_train, y_train)

clf_params = xgb_classifier.get_xgb_params()

mlflow.log_params(clf_params)

signature = infer_signature(X_train, xgb_classifier.predict(X_train))

model_info = mlflow.xgboost.log_model(

xgb_classifier, "iris-classifier", signature=signature

)

# Load saved model and make predictions

xgb_classifier_saved = mlflow.pyfunc.load_model(model_info.model_uri)

y_pred = xgb_classifier_saved.predict(X_test)

For more information, see mlflow.xgboost.

LightGBM (lightgbm)

The lightgbm model flavor enables logging of LightGBM models

in MLflow format via the mlflow.lightgbm.save_model() and mlflow.lightgbm.log_model() methods.

These methods also add the python_function flavor to the MLflow Models that they produce, allowing the

models to be interpreted as generic Python functions for inference via

mlflow.pyfunc.load_model(). You can also use the mlflow.lightgbm.load_model()

method to load MLflow Models with the lightgbm model flavor in native LightGBM format.

Note that the scikit-learn API for LightGBM is now supported. For more information, see mlflow.lightgbm.

LightGBM pyfunc usage

The example below

Loads the IRIS dataset from

scikit-learnTrains a LightGBM

LGBMClassifierLogs the model and feature importance’s using

mlflowLoads the logged model and makes predictions

from lightgbm import LGBMClassifier

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

import mlflow

from mlflow.models import infer_signature

data = load_iris()

# Remove special characters from feature names to be able to use them as keys for mlflow metrics

feature_names = [

name.replace(" ", "_").replace("(", "").replace(")", "")

for name in data["feature_names"]

]

X_train, X_test, y_train, y_test = train_test_split(

data["data"], data["target"], test_size=0.2

)

# create model instance

lgb_classifier = LGBMClassifier(

n_estimators=10,

max_depth=3,

learning_rate=1,

objective="binary:logistic",

random_state=123,

)

# Fit and save model and LGBMClassifier feature importances as mlflow metrics

with mlflow.start_run():

lgb_classifier.fit(X_train, y_train)

feature_importances = dict(zip(feature_names, lgb_classifier.feature_importances_))

feature_importance_metrics = {

f"feature_importance_{feature_name}": imp_value

for feature_name, imp_value in feature_importances.items()

}

mlflow.log_metrics(feature_importance_metrics)

signature = infer_signature(X_train, lgb_classifier.predict(X_train))

model_info = mlflow.lightgbm.log_model(

lgb_classifier, "iris-classifier", signature=signature

)

# Load saved model and make predictions

lgb_classifier_saved = mlflow.pyfunc.load_model(model_info.model_uri)

y_pred = lgb_classifier_saved.predict(X_test)

print(y_pred)

CatBoost (catboost)

The catboost model flavor enables logging of CatBoost models

in MLflow format via the mlflow.catboost.save_model() and mlflow.catboost.log_model() methods.

These methods also add the python_function flavor to the MLflow Models that they produce, allowing the

models to be interpreted as generic Python functions for inference via

mlflow.pyfunc.load_model(). You can also use the mlflow.catboost.load_model()

method to load MLflow Models with the catboost model flavor in native CatBoost format.

For more information, see mlflow.catboost.

CatBoost pyfunc usage

For a CatBoost Classifier model, an example configuration for the pyfunc predict() method is:

import mlflow

from mlflow.models import infer_signature

from catboost import CatBoostClassifier

from sklearn import datasets

# prepare data

X, y = datasets.load_wine(as_frame=False, return_X_y=True)

# train the model

model = CatBoostClassifier(

iterations=5,

loss_function="MultiClass",

allow_writing_files=False,

)

model.fit(X, y)

# create model signature

predictions = model.predict(X)

signature = infer_signature(X, predictions)

# log the model into a mlflow run

with mlflow.start_run():

model_info = mlflow.catboost.log_model(model, "model", signature=signature)

# load the logged model and make a prediction

catboost_pyfunc = mlflow.pyfunc.load_model(model_uri=model_info.model_uri)

print(catboost_pyfunc.predict(X[:5]))

Spacy(spaCy)

The spaCy model flavor enables logging of spaCy models in MLflow format via

the mlflow.spacy.save_model() and mlflow.spacy.log_model() methods. Additionally, these

methods add the python_function flavor to the MLflow Models that they produce, allowing the models to be

interpreted as generic Python functions for inference via mlflow.pyfunc.load_model().

This loaded PyFunc model can only be scored with DataFrame input. You can

also use the mlflow.spacy.load_model() method to load MLflow Models with the spacy model flavor

in native spaCy format.

For more information, see mlflow.spacy.

Spacy pyfunc usage

The example below shows how to train a Spacy TextCategorizer model, log the model artifact and metrics to the

mlflow tracking server and then load the saved model to make predictions. For this example, we will be using the

Polarity 2.0 dataset available in the nltk package. This dataset consists of 10000 positive and 10000 negative

short movie reviews.

First we convert the texts and sentiment labels (“pos” or “neg”) from NLTK native format to Spacy’s DocBin format:

import pandas as pd

import spacy

from nltk.corpus import movie_reviews

from spacy import Language

from spacy.tokens import DocBin

nltk.download("movie_reviews")

def get_sentences(sentiment_type: str) -> pd.DataFrame:

"""Reconstruct the sentences from the word lists for each review record for a specific ``sentiment_type``

as a pandas DataFrame with two columns: 'sentence' and 'sentiment'.

"""

file_ids = movie_reviews.fileids(sentiment_type)

sent_df = []

for file_id in file_ids:

sentence = " ".join(movie_reviews.words(file_id))

sent_df.append({"sentence": sentence, "sentiment": sentiment_type})

return pd.DataFrame(sent_df)

def convert(data_df: pd.DataFrame, target_file: str):

"""Convert a DataFrame with 'sentence' and 'sentiment' columns to a

spacy DocBin object and save it to 'target_file'.

"""

nlp = spacy.blank("en")

sentiment_labels = data_df.sentiment.unique()

spacy_doc = DocBin()

for _, row in data_df.iterrows():

sent_tokens = nlp.make_doc(row["sentence"])

# To train a Spacy TextCategorizer model, the label must be attached to the "cats" dictionary of the "Doc"

# object, e.g. {"pos": 1.0, "neg": 0.0} for a "pos" label.

for label in sentiment_labels:

sent_tokens.cats[label] = 1.0 if label == row["sentiment"] else 0.0

spacy_doc.add(sent_tokens)

spacy_doc.to_disk(target_file)

# Build a single DataFrame with both positive and negative reviews, one row per review

review_data = [get_sentences(sentiment_type) for sentiment_type in ("pos", "neg")]

review_data = pd.concat(review_data, axis=0)

# Split the DataFrame into a train and a dev set

train_df = review_data.groupby("sentiment", group_keys=False).apply(

lambda x: x.sample(frac=0.7, random_state=100)

)

dev_df = review_data.loc[review_data.index.difference(train_df.index), :]

# Save the train and dev data files to the current directory as "corpora.train" and "corpora.dev", respectively

convert(train_df, "corpora.train")

convert(dev_df, "corpora.dev")

To set up the training job, we first need to generate a configuration file as described in the Spacy Documentation

For simplicity, we will only use a TextCategorizer in the pipeline.

python -m spacy init config --pipeline textcat --lang en mlflow-textcat.cfg

Change the default train and dev paths in the config file to the current directory:

[paths]

- train = null

- dev = null

+ train = "."

+ dev = "."

In Spacy, the training loop is defined internally in Spacy’s code. Spacy provides a “logging” extension point where

we can use mlflow. To do this,

We have to define a function to write metrics / model input to

mlfowRegister it as a logger in

Spacy’s component registryChange the default console logger in the

Spacy’s configuration file (mlflow-textcat.cfg)

from typing import IO, Callable, Tuple, Dict, Any, Optional

import spacy

from spacy import Language

import mlflow

@spacy.registry.loggers("mlflow_logger.v1")

def mlflow_logger():

"""Returns a function, ``setup_logger`` that returns two functions:

* ``log_step`` is called internally by Spacy for every evaluation step. We can log the intermediate train and

validation scores to the mlflow tracking server here.

* ``finalize``: is called internally by Spacy after training is complete. We can log the model artifact to the

mlflow tracking server here.

"""

def setup_logger(

nlp: Language,

stdout: IO = sys.stdout,

stderr: IO = sys.stderr,

) -> Tuple[Callable, Callable]:

def log_step(info: Optional[Dict[str, Any]]):

if info:

step = info["step"]

score = info["score"]

metrics = {}

for pipe_name in nlp.pipe_names:

loss = info["losses"][pipe_name]

metrics[f"{pipe_name}_loss"] = loss

metrics[f"{pipe_name}_score"] = score

mlflow.log_metrics(metrics, step=step)

def finalize():

uri = mlflow.spacy.log_model(nlp, "mlflow_textcat_example")

mlflow.end_run()

return log_step, finalize

return setup_logger

Check the spacy-loggers library <https://pypi.org/project/spacy-loggers/> _ for a more complete implementation.

Point to our mlflow logger in Spacy configuration file. For this example, we will lower the number of training steps

and eval frequency:

[training.logger]

- @loggers = "spacy.ConsoleLogger.v1"

- dev = null

+ @loggers = "mlflow_logger.v1"

[training]

- max_steps = 20000

- eval_frequency = 100

+ max_steps = 100

+ eval_frequency = 10

Train our model:

from spacy.cli.train import train as spacy_train

spacy_train("mlflow-textcat.cfg")

To make predictions, we load the saved model from the last run:

from mlflow import MlflowClient

# look up the last run info from mlflow

client = MlflowClient()

last_run = client.search_runs(experiment_ids=["0"], max_results=1)[0]

# We need to append the spacy model directory name to the artifact uri

spacy_model = mlflow.pyfunc.load_model(

f"{last_run.info.artifact_uri}/mlflow_textcat_example"

)

predictions_in = dev_df.loc[:, ["sentence"]]

predictions_out = spacy_model.predict(predictions_in).squeeze().tolist()

predicted_labels = [

"pos" if row["pos"] > row["neg"] else "neg" for row in predictions_out

]

print(dev_df.assign(predicted_sentiment=predicted_labels))

Fastai(fastai)

The fastai model flavor enables logging of fastai Learner models in MLflow format via

the mlflow.fastai.save_model() and mlflow.fastai.log_model() methods. Additionally, these

methods add the python_function flavor to the MLflow Models that they produce, allowing the models to be

interpreted as generic Python functions for inference via mlflow.pyfunc.load_model(). This loaded PyFunc model can

only be scored with DataFrame input. You can also use the mlflow.fastai.load_model() method to

load MLflow Models with the fastai model flavor in native fastai format.

The interface for utilizing a fastai model loaded as a pyfunc type for generating predictions uses a

Pandas DataFrame argument.

This example runs the fastai tabular tutorial,

logs the experiments, saves the model in fastai format and loads the model to get predictions

using a fastai data loader:

from fastai.data.external import URLs, untar_data

from fastai.tabular.core import Categorify, FillMissing, Normalize, TabularPandas

from fastai.tabular.data import TabularDataLoaders

from fastai.tabular.learner import tabular_learner

from fastai.data.transforms import RandomSplitter

from fastai.metrics import accuracy

from fastcore.basics import range_of

import pandas as pd

import mlflow

import mlflow.fastai

def print_auto_logged_info(r):

tags = {k: v for k, v in r.data.tags.items() if not k.startswith("mlflow.")}

artifacts = [

f.path for f in mlflow.MlflowClient().list_artifacts(r.info.run_id, "model")

]

print(f"run_id: {r.info.run_id}")

print(f"artifacts: {artifacts}")

print(f"params: {r.data.params}")

print(f"metrics: {r.data.metrics}")

print(f"tags: {tags}")

def main(epochs=5, learning_rate=0.01):

path = untar_data(URLs.ADULT_SAMPLE)

path.ls()

df = pd.read_csv(path / "adult.csv")

dls = TabularDataLoaders.from_csv(

path / "adult.csv",

path=path,

y_names="salary",

cat_names=[

"workclass",

"education",

"marital-status",

"occupation",

"relationship",

"race",

],

cont_names=["age", "fnlwgt", "education-num"],

procs=[Categorify, FillMissing, Normalize],

)

splits = RandomSplitter(valid_pct=0.2)(range_of(df))

to = TabularPandas(

df,

procs=[Categorify, FillMissing, Normalize],

cat_names=[

"workclass",

"education",

"marital-status",

"occupation",

"relationship",

"race",

],

cont_names=["age", "fnlwgt", "education-num"],

y_names="salary",

splits=splits,

)

dls = to.dataloaders(bs=64)

model = tabular_learner(dls, metrics=accuracy)

mlflow.fastai.autolog()

with mlflow.start_run() as run:

model.fit(5, 0.01)

mlflow.fastai.log_model(model, "model")

print_auto_logged_info(mlflow.get_run(run_id=run.info.run_id))

model_uri = f"runs:/{run.info.run_id}/model"

loaded_model = mlflow.fastai.load_model(model_uri)

test_df = df.copy()

test_df.drop(["salary"], axis=1, inplace=True)

dl = learn.dls.test_dl(test_df)

predictions, _ = loaded_model.get_preds(dl=dl)

px = pd.DataFrame(predictions).astype("float")

px.head(5)

main()

Output (Pandas DataFrame):

Index |

Probability of first class |

Probability of second class |

|---|---|---|

0 |

0.545088 |

0.454912 |

1 |

0.503172 |

0.496828 |

2 |

0.962663 |

0.037337 |

3 |

0.206107 |

0.793893 |

4 |

0.807599 |

0.192401 |

Alternatively, when using the python_function flavor, get predictions from a DataFrame.

from fastai.data.external import URLs, untar_data

from fastai.tabular.core import Categorify, FillMissing, Normalize, TabularPandas

from fastai.tabular.data import TabularDataLoaders

from fastai.tabular.learner import tabular_learner

from fastai.data.transforms import RandomSplitter

from fastai.metrics import accuracy

from fastcore.basics import range_of

import pandas as pd

import mlflow

import mlflow.fastai

model_uri = ...

path = untar_data(URLs.ADULT_SAMPLE)

df = pd.read_csv(path / "adult.csv")

test_df = df.copy()

test_df.drop(["salary"], axis=1, inplace=True)

loaded_model = mlflow.pyfunc.load_model(model_uri)

loaded_model.predict(test_df)

Output (Pandas DataFrame):

Index |

Probability of first class, Probability of second class |

|---|---|

0 |

[0.5450878, 0.45491222] |

1 |

[0.50317234, 0.49682766] |

2 |

[0.9626626, 0.037337445] |

3 |

[0.20610662, 0.7938934] |

4 |

[0.8075987, 0.19240129] |

For more information, see mlflow.fastai.

Statsmodels (statsmodels)

The statsmodels model flavor enables logging of Statsmodels models in MLflow format via the mlflow.statsmodels.save_model()

and mlflow.statsmodels.log_model() methods.

These methods also add the python_function flavor to the MLflow Models that they produce, allowing the

models to be interpreted as generic Python functions for inference via

mlflow.pyfunc.load_model(). This loaded PyFunc model can only be scored with DataFrame input.

You can also use the mlflow.statsmodels.load_model()

method to load MLflow Models with the statsmodels model flavor in native statsmodels format.

As for now, automatic logging is restricted to parameters, metrics and models generated by a call to fit

on a statsmodels model.

Statsmodels pyfunc usage

The following 2 examples illustrate usage of a basic regression model (OLS) and an ARIMA time series model from the following statsmodels apis : statsmodels.formula.api and statsmodels.tsa.api

For a minimal statsmodels regression model, here is an example of the pyfunc predict() method :

import mlflow

import pandas as pd

from sklearn.datasets import load_diabetes

import statsmodels.formula.api as smf

# load the diabetes dataset from sklearn

diabetes = load_diabetes()

# create X and y dataframes for the features and target

X = pd.DataFrame(data=diabetes.data, columns=diabetes.feature_names)

y = pd.DataFrame(data=diabetes.target, columns=["target"])

# concatenate X and y dataframes

df = pd.concat([X, y], axis=1)

# create the linear regression model (ordinary least squares)

model = smf.ols(

formula="target ~ age + sex + bmi + bp + s1 + s2 + s3 + s4 + s5 + s6", data=df

)

mlflow.statsmodels.autolog(

log_models=True,

disable=False,

exclusive=False,

disable_for_unsupported_versions=False,

silent=False,

registered_model_name=None,

)

with mlflow.start_run():

res = model.fit(method="pinv", use_t=True)

model_info = mlflow.statsmodels.log_model(res, artifact_path="OLS_model")

# load the pyfunc model

statsmodels_pyfunc = mlflow.pyfunc.load_model(model_uri=model_info.model_uri)

# generate predictions

predictions = statsmodels_pyfunc.predict(X)

print(predictions)

For a minimal time series ARIMA model, here is an example of the pyfunc predict() method :

import mlflow

import numpy as np

import pandas as pd

from statsmodels.tsa.arima.model import ARIMA

# create a time series dataset with seasonality

np.random.seed(0)

# generate a time index with a daily frequency

dates = pd.date_range(start="2022-12-01", end="2023-12-01", freq="D")

# generate the seasonal component (weekly)

seasonality = np.sin(np.arange(len(dates)) * (2 * np.pi / 365.25) * 7)

# generate the trend component

trend = np.linspace(-5, 5, len(dates)) + 2 * np.sin(

np.arange(len(dates)) * (2 * np.pi / 365.25) * 0.1

)

# generate the residual component

residuals = np.random.normal(0, 1, len(dates))

# generate the final time series by adding the components

time_series = seasonality + trend + residuals

# create a dataframe from the time series

data = pd.DataFrame({"date": dates, "value": time_series})

data.set_index("date", inplace=True)

order = (1, 0, 0)

# create the ARIMA model

model = ARIMA(data, order=order)

mlflow.statsmodels.autolog(

log_models=True,

disable=False,

exclusive=False,

disable_for_unsupported_versions=False,

silent=False,

registered_model_name=None,

)

with mlflow.start_run():

res = model.fit()

mlflow.log_params(

{

"order": order,

"trend": model.trend,

"seasonal_order": model.seasonal_order,

}

)

mlflow.log_params(res.params)

mlflow.log_metric("aic", res.aic)

mlflow.log_metric("bic", res.bic)

model_info = mlflow.statsmodels.log_model(res, artifact_path="ARIMA_model")

# load the pyfunc model

statsmodels_pyfunc = mlflow.pyfunc.load_model(model_uri=model_info.model_uri)

# prediction dataframes for a TimeSeriesModel must have exactly one row and include columns called start and end

start = pd.to_datetime("2024-01-01")

end = pd.to_datetime("2024-01-07")

# generate predictions

prediction_data = pd.DataFrame({"start": start, "end": end}, index=[0])

predictions = statsmodels_pyfunc.predict(prediction_data)

print(predictions)

For more information, see mlflow.statsmodels.

Prophet (prophet)

The prophet model flavor enables logging of Prophet models in MLflow format via the mlflow.prophet.save_model()

and mlflow.prophet.log_model() methods.

These methods also add the python_function flavor to the MLflow Models that they produce, allowing the

models to be interpreted as generic Python functions for inference via

mlflow.pyfunc.load_model(). This loaded PyFunc model can only be scored with DataFrame input.

You can also use the mlflow.prophet.load_model()

method to load MLflow Models with the prophet model flavor in native prophet format.

Prophet pyfunc usage

This example uses a time series dataset from Prophet’s GitHub repository, containing log number of daily views to Peyton Manning’s Wikipedia page for several years. A sample of the dataset is as follows:

ds |

y |

|---|---|

2007-12-10 |

9.59076113897809 |

2007-12-11 |

8.51959031601596 |

2007-12-12 |

8.18367658262066 |

2007-12-13 |

8.07246736935477 |

import numpy as np

import pandas as pd

from prophet import Prophet, serialize

from prophet.diagnostics import cross_validation, performance_metrics

import mlflow

from mlflow.models import infer_signature

# URL to the dataset

SOURCE_DATA = "https://raw.githubusercontent.com/facebook/prophet/main/examples/example_wp_log_peyton_manning.csv"

np.random.seed(12345)

def extract_params(pr_model):

params = {attr: getattr(pr_model, attr) for attr in serialize.SIMPLE_ATTRIBUTES}

return {k: v for k, v in params.items() if isinstance(v, (int, float, str, bool))}

# Load the training data

train_df = pd.read_csv(SOURCE_DATA)

# Create a "test" DataFrame with the "ds" column containing 10 days after the end date in train_df

test_dates = pd.date_range(start="2016-01-21", end="2016-01-31", freq="D")

test_df = pd.DataFrame({"ds": test_dates})

# Initialize Prophet model with specific parameters

prophet_model = Prophet(changepoint_prior_scale=0.5, uncertainty_samples=7)

with mlflow.start_run():

# Fit the model on the training data

prophet_model.fit(train_df)

# Extract and log model parameters

params = extract_params(prophet_model)

mlflow.log_params(params)

# Perform cross-validation

cv_results = cross_validation(

prophet_model,

initial="900 days",

period="30 days",

horizon="30 days",

parallel="threads",

disable_tqdm=True,

)

# Calculate and log performance metrics

cv_metrics = performance_metrics(cv_results, metrics=["mse", "rmse", "mape"])

average_metrics = cv_metrics.drop(columns=["horizon"]).mean(axis=0).to_dict()

mlflow.log_metrics(average_metrics)

# Generate predictions and infer model signature

train = prophet_model.history

# Log the Prophet model with MLflow

model_info = mlflow.prophet.log_model(

prophet_model,

artifact_path="prophet_model",

input_example=train[["ds"]].head(10),

)

# Load the saved model as a pyfunc

prophet_model_saved = mlflow.pyfunc.load_model(model_info.model_uri)

# Generate predictions for the test set

predictions = prophet_model_saved.predict(test_df)

# Truncate and display the forecast if needed

forecast = predictions[["ds", "yhat"]]

print(f"forecast:\n{forecast.head(5)}")

Output (Pandas DataFrame):

Index |

ds |

yhat |

yhat_upper |

yhat_lower |

|---|---|---|---|---|

0 |

2016-01-21 |

8.526513 |

8.827397 |

8.328563 |

1 |

2016-01-22 |

8.541355 |

9.434994 |

8.112758 |

2 |

2016-01-23 |

8.308332 |

8.633746 |

8.201323 |

3 |

2016-01-24 |

8.676326 |

9.534593 |

8.020874 |

4 |

2016-01-25 |

8.983457 |

9.430136 |

8.121798 |

For more information, see mlflow.prophet.

Pmdarima (pmdarima)

The pmdarima model flavor enables logging of pmdarima models in MLflow

format via the mlflow.pmdarima.save_model() and mlflow.pmdarima.log_model() methods.

These methods also add the python_function flavor to the MLflow Models that they produce, allowing the

model to be interpreted as generic Python functions for inference via mlflow.pyfunc.load_model().

This loaded PyFunc model can only be scored with a DataFrame input.

You can also use the mlflow.pmdarima.load_model() method to load MLflow Models with the pmdarima

model flavor in native pmdarima formats.

The interface for utilizing a pmdarima model loaded as a pyfunc type for generating forecast predictions uses

a single-row Pandas DataFrame configuration argument. The following columns in this configuration

Pandas DataFrame are supported:

n_periods(required) - specifies the number of future periods to generate starting from the last datetime valueof the training dataset, utilizing the frequency of the input training series when the model was trained. (for example, if the training data series elements represent one value per hour, in order to forecast 3 days of future data, set the column

n_periodsto72.

X(optional) - exogenous regressor values (only supported in pmdarima version >= 1.8.0) a 2D array of values forfuture time period events. For more information, read the underlying library explanation.

return_conf_int(optional) - a boolean (Default:False) for whether to return confidence interval values.See above note.

alpha(optional) - the significance value for calculating confidence intervals. (Default:0.05)

An example configuration for the pyfunc predict of a pmdarima model is shown below, with a future period

prediction count of 100, a confidence interval calculation generation, no exogenous regressor elements, and a default

alpha of 0.05:

Index |

n_periods |

return_conf_int |

|---|---|---|

0 |

100 |

True |

Warning

The Pandas DataFrame passed to a pmdarima pyfunc flavor must only contain 1 row.

Note

When predicting a pmdarima flavor, the predict method’s DataFrame configuration column

return_conf_int’s value controls the output format. When the column’s value is set to False or None

(which is the default if this column is not supplied in the configuration DataFrame), the schema of the

returned Pandas DataFrame is a single column: ["yhat"]. When set to True, the schema of the returned

DataFrame is: ["yhat", "yhat_lower", "yhat_upper"] with the respective lower (yhat_lower) and

upper (yhat_upper) confidence intervals added to the forecast predictions (yhat).

Example usage of pmdarima artifact loaded as a pyfunc with confidence intervals calculated:

import pmdarima

import mlflow

import pandas as pd

data = pmdarima.datasets.load_airpassengers()

with mlflow.start_run():

model = pmdarima.auto_arima(data, seasonal=True)

mlflow.pmdarima.save_model(model, "/tmp/model.pmd")

loaded_pyfunc = mlflow.pyfunc.load_model("/tmp/model.pmd")

prediction_conf = pd.DataFrame(

[{"n_periods": 4, "return_conf_int": True, "alpha": 0.1}]

)

predictions = loaded_pyfunc.predict(prediction_conf)

Output (Pandas DataFrame):

Index |

yhat |

yhat_lower |

yhat_upper |

|---|---|---|---|

0 |

467.573731 |

423.30995 |

511.83751 |

1 |

490.494467 |

416.17449 |

564.81444 |

2 |

509.138684 |

420.56255 |

597.71117 |

3 |

492.554714 |

397.30634 |

587.80309 |

Warning

Signature logging for pmdarima will not function correctly if return_conf_int is set to True from

a non-pyfunc artifact. The output of the native ARIMA.predict() when returning confidence intervals is not

a recognized signature type.

OpenAI (openai) (Experimental)

The full guide, including tutorials and detailed documentation for using the openai flavor can be viewed here.

LangChain (langchain) (Experimental)

The full guide, including tutorials and detailed documentation for using the langchain flavor can be viewed here.

John Snow Labs (johnsnowlabs) (Experimental)

Attention

The johnsnowlabs flavor is in active development and is marked as Experimental. Public APIs may change and new features are

subject to be added as additional functionality is brought to the flavor.

The johnsnowlabs model flavor gives you access to 20.000+ state-of-the-art enterprise NLP models in 200+ languages for medical, finance, legal and many more domains.

You can use mlflow.johnsnowlabs.log_model() to log and export your model as

mlflow.pyfunc.PyFuncModel.

This enables you to integrate any John Snow Labs model

into the MLflow framework. You can easily deploy your models for inference with MLflows serve functionalities.

Models are interpreted as a generic Python function for inference via mlflow.pyfunc.load_model().

You can also use the mlflow.johnsnowlabs.load_model() function to load a saved or logged MLflow

Model with the johnsnowlabs flavor from an stored artifact.

Features include: LLM’s, Text Summarization, Question Answering, Named Entity Recognition, Relation Extraction, Sentiment Analysis, Spell Checking, Image Classification, Automatic Speech Recognition and much more, powered by the latest Transformer Architectures. The models are provided by John Snow Labs and requires a John Snow Labs Enterprise NLP License. You can reach out to us for a research or industry license.

Example: Export a John Snow Labs to MLflow format

import json

import os

import pandas as pd

from johnsnowlabs import nlp

import mlflow

from mlflow.pyfunc import spark_udf

# 1) Write your raw license.json string into the 'JOHNSNOWLABS_LICENSE_JSON' env variable for MLflow

creds = {

"AWS_ACCESS_KEY_ID": "...",

"AWS_SECRET_ACCESS_KEY": "...",

"SPARK_NLP_LICENSE": "...",

"SECRET": "...",

}

os.environ["JOHNSNOWLABS_LICENSE_JSON"] = json.dumps(creds)

# 2) Install enterprise libraries

nlp.install()

# 3) Start a Spark session with enterprise libraries

spark = nlp.start()

# 4) Load a model and test it

nlu_model = "en.classify.bert_sequence.covid_sentiment"

model_save_path = "my_model"

johnsnowlabs_model = nlp.load(nlu_model)

johnsnowlabs_model.predict(["I hate COVID,", "I love COVID"])

# 5) Export model with pyfunc and johnsnowlabs flavors

with mlflow.start_run():

model_info = mlflow.johnsnowlabs.log_model(johnsnowlabs_model, model_save_path)

# 6) Load model with johnsnowlabs flavor

mlflow.johnsnowlabs.load_model(model_info.model_uri)

# 7) Load model with pyfunc flavor

mlflow.pyfunc.load_model(model_save_path)

pandas_df = pd.DataFrame({"text": ["Hello World"]})

spark_df = spark.createDataFrame(pandas_df).coalesce(1)

pyfunc_udf = spark_udf(

spark=spark,

model_uri=model_save_path,

env_manager="virtualenv",

result_type="string",

)

new_df = spark_df.withColumn("prediction", pyfunc_udf(*pandas_df.columns))

# 9) You can now use the mlflow models serve command to serve the model see next section

# 10) You can also use x command to deploy model inside of a container see next section

To deploy the John Snow Labs model as a container

Start the Docker Container

docker run -p 5001:8080 -e JOHNSNOWLABS_LICENSE_JSON=your_json_string "mlflow-pyfunc"

Query server

curl http://127.0.0.1:5001/invocations -H 'Content-Type: application/json' -d '{

"dataframe_split": {

"columns": ["text"],

"data": [["I hate covid"], ["I love covid"]]

}

}'

To deploy the John Snow Labs model without a container

Export env variable and start server

export JOHNSNOWLABS_LICENSE_JSON=your_json_string

mlflow models serve -m <model_uri>

Query server

curl http://127.0.0.1:5000/invocations -H 'Content-Type: application/json' -d '{

"dataframe_split": {

"columns": ["text"],

"data": [["I hate covid"], ["I love covid"]]

}

}'

Diviner (diviner)

The diviner model flavor enables logging of

diviner models in MLflow format via the

mlflow.diviner.save_model() and mlflow.diviner.log_model() methods. These methods also add the

python_function flavor to the MLflow Models that they produce, allowing the model to be interpreted as generic

Python functions for inference via mlflow.pyfunc.load_model().

This loaded PyFunc model can only be scored with a DataFrame input.

You can also use the mlflow.diviner.load_model() method to load MLflow Models with the diviner

model flavor in native diviner formats.

Diviner Types

Diviner is a library that provides an orchestration framework for performing time series forecasting on groups of

related series. Forecasting in diviner is accomplished through wrapping popular open source libraries such as

prophet and pmdarima. The diviner

library offers a simplified set of APIs to simultaneously generate distinct time series forecasts for multiple data

groupings using a single input DataFrame and a unified high-level API.

Metrics and Parameters logging for Diviner

Unlike other flavors that are supported in MLflow, Diviner has the concept of grouped models. As a collection of many

(perhaps thousands) of individual forecasting models, the burden to the tracking server to log individual metrics

and parameters for each of these models is significant. For this reason, metrics and parameters are exposed for

retrieval from Diviner’s APIs as Pandas DataFrames, rather than discrete primitive values.